Getting Started - Locust

Follow this guide for a basic example of running your Locust tests on the Testable platform. Our example project will test our sample REST API.

Start by signing up and creating a new test case using the Create Test button on the dashboard.

Enter the test case name (e.g. Locust Demo) and press Next.

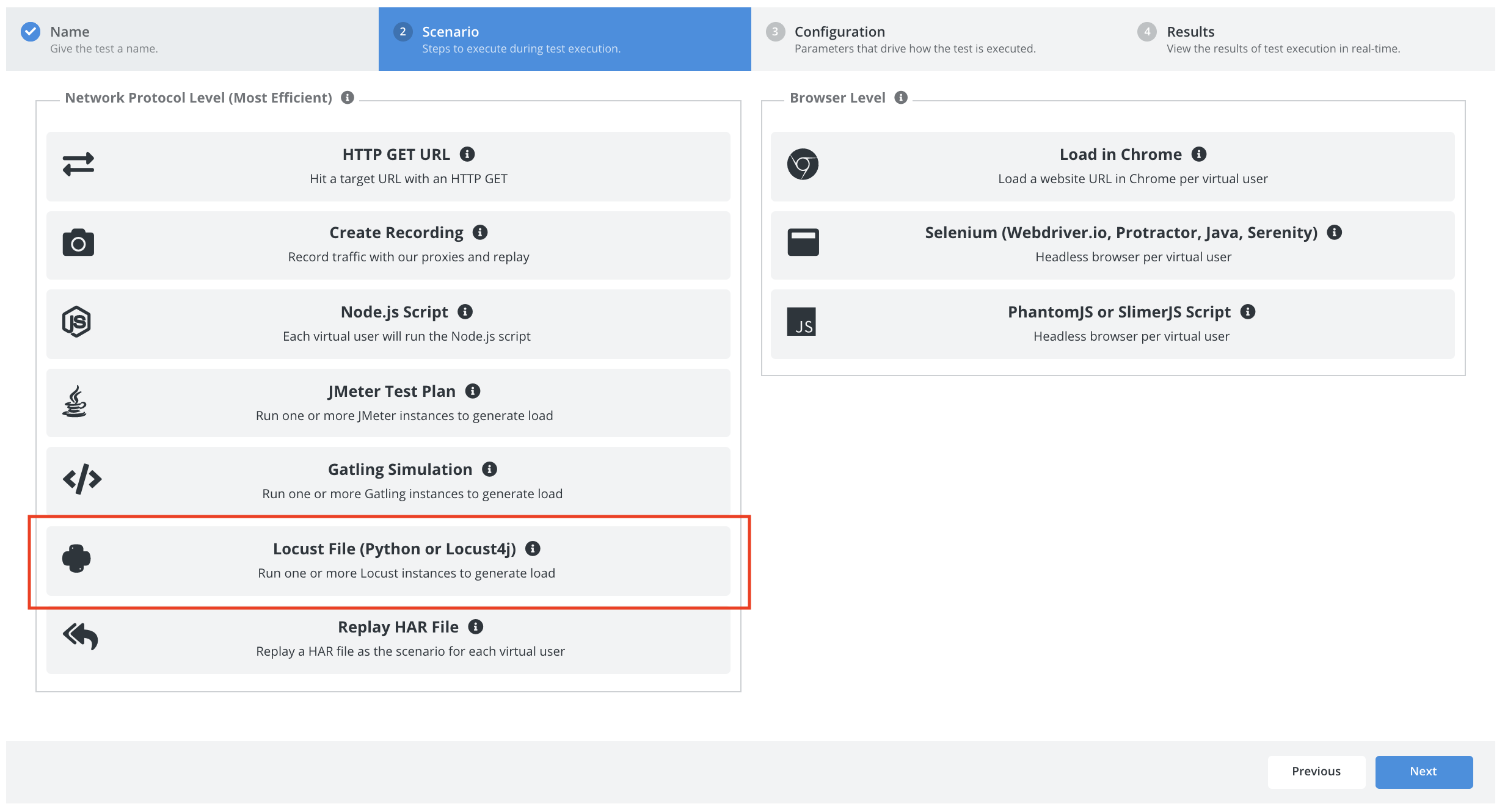

Scenario

Select Locust file as the scenario type.

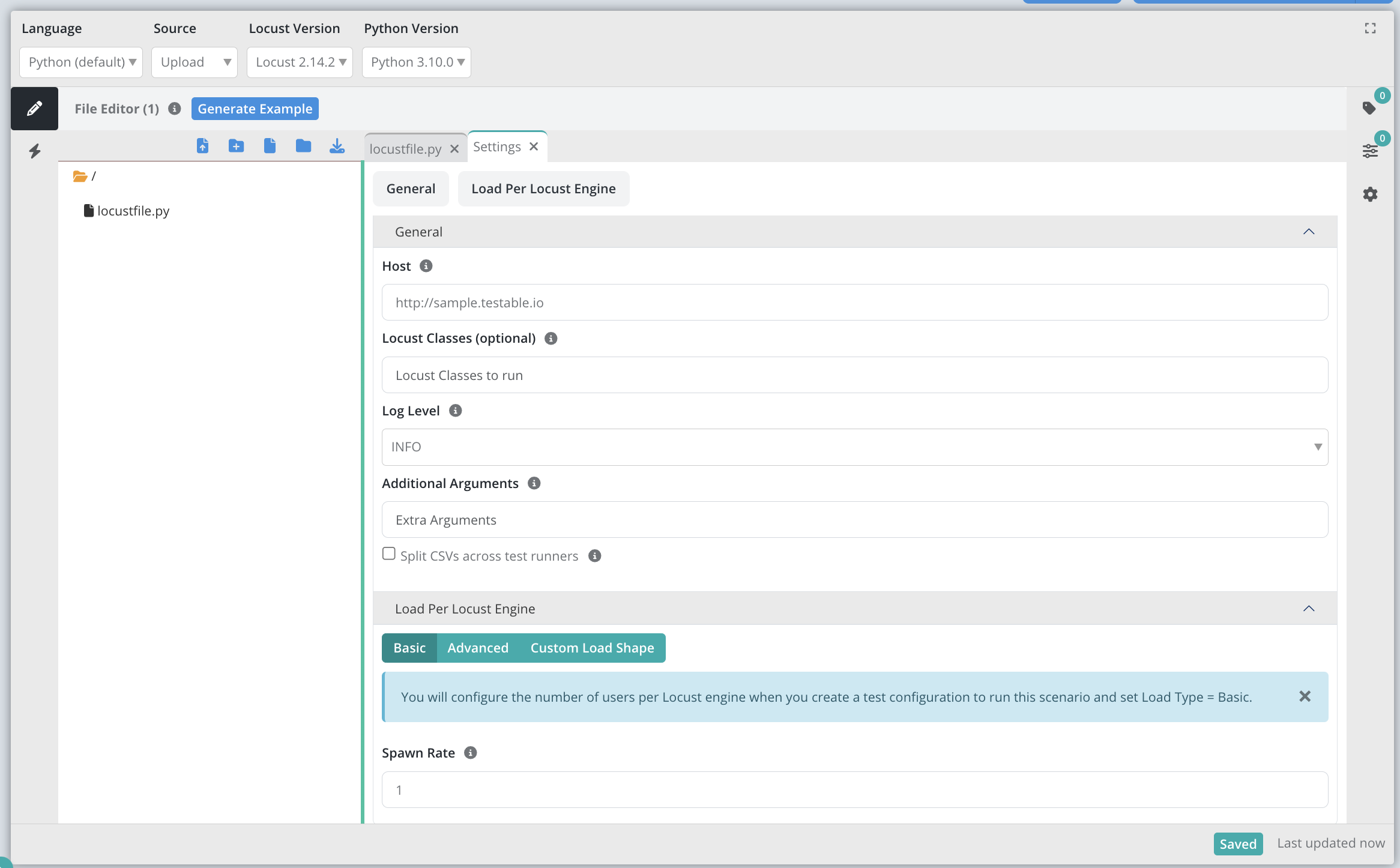

Let’s use the following options:

- Language: We will use Python.

- Source: Upload. See the detailed documentation for all the options to get our code onto Testable.

- Version: Let’s use the latest version (2.14.2 as of this writing).

- Python Version: 3.10

Next press the Generate Example button to generate an example locustfile.py file.

In the Settings tab, set the following options:

- Host: http://sample.testable.io (the `--host` argument to Locust)

- Hatch Rate: 1 (the `--hatch-rate` argument to Locust, renamed `--spawn-rate` in newer Locust releases)
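For readers who also run Locust locally, the Host and Hatch Rate settings above correspond to ordinary Locust command-line flags. The sketch below is a minimal, hypothetical helper (`build_locust_args` is not part of Locust or Testable) that assembles such a command line. It assumes a recent Locust release, where the hatch rate flag is spelled `--spawn-rate`; the user count and run time are illustrative values, not taken from this guide.

```python
import shlex


def build_locust_args(host, spawn_rate, users=5, run_time="1m",
                      locustfile="locustfile.py"):
    """Return an argv list for a headless local Locust run.

    Hypothetical helper for illustration only. The users and run_time
    defaults are assumed values, not Testable defaults.
    """
    return [
        "locust",
        "-f", locustfile,
        "--headless",                     # run without the Locust web UI
        "--host", host,                   # the Host setting
        "--users", str(users),
        "--spawn-rate", str(spawn_rate),  # the Hatch Rate setting
        "--run-time", run_time,
    ]


args = build_locust_args("http://sample.testable.io", spawn_rate=1)
command = shlex.join(args)  # shell-safe string form of the argv list
print(command)
```

On Testable itself none of this is needed, since the platform passes these values to each Locust engine for you; the helper is only a way to reproduce roughly the same settings on your own machine.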

We will use the example locustfile.py file that gets populated when we pressed the Generate Example button:

    from locust import HttpUser, between, task, events

    class MyLocust(HttpUser):
        # Each simulated user waits 1 to 3 seconds between tasks
        wait_time = between(1, 3)

        @task
        def ibm(self):
            self.client.get('/stocks/IBM')

        @task
        def aapl(self):
            self.client.get('/stocks/AAPL')

And that’s it, we’ve now defined our scenario! To try it out before configuring a load test, click the Smoke Test button in the upper right and watch Testable execute the scenario with 1 concurrent user. You should see all results, including logging and network traces, appear in real time as the smoke test runs on one of our shared test runners.

Next, click on the Configuration tab or press the Next button at the bottom to move to the next step.

Configuration

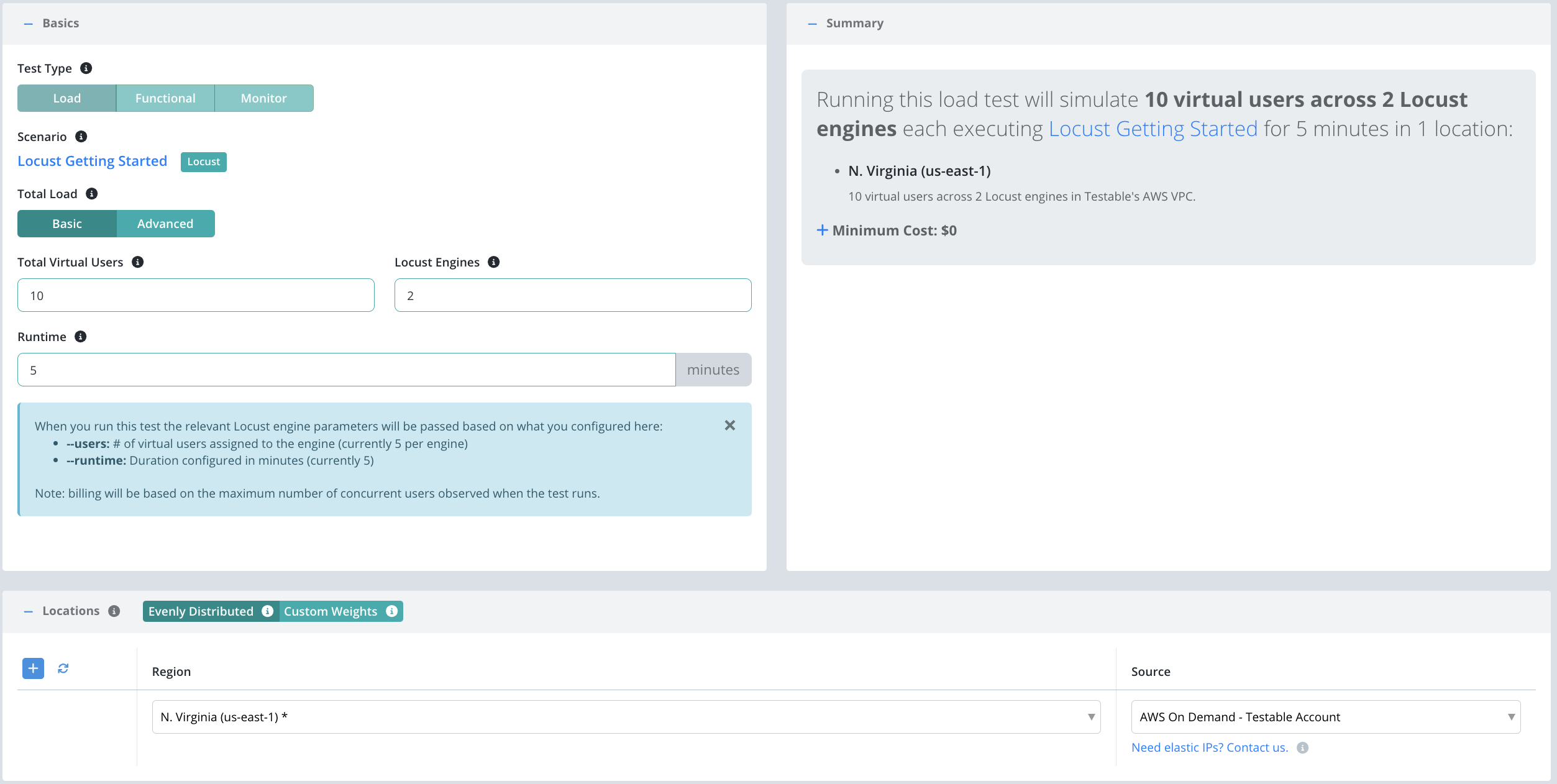

Now that we have the scenario for our test case we need to define a few parameters before we can execute our test:

- Total Load: We will choose Basic here and specify the total virtual users, number of Locust engines, and runtime via the Testable UI. Testable will then pass the right values for `--users`/`--run-time` to each Locust engine. Alternatively, choose Advanced and simply specify how many Locust engines to spin up, with the load parameters either hard-coded or configured via scenario params.

- Location(s): Choose the location in which to run your test and the test runner source that indicates which test runners to use in that location to run the load test (e.g. on the public shared grid).
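To make the Basic option concrete: the total virtual users are divided among the Locust engines, and each engine receives its share via `--users`. The exact allocation Testable uses is not described in this guide; the sketch below assumes a plain even split, with any remainder going to the first engines. `split_users` is a hypothetical name used only for illustration.

```python
def split_users(total_users, engines):
    """Evenly divide total_users across engines (assumed strategy)."""
    if engines < 1:
        raise ValueError("engines must be at least 1")
    if total_users < 0:
        raise ValueError("total_users must be non-negative")
    base, remainder = divmod(total_users, engines)
    # The first `remainder` engines each take one extra user.
    return [base + 1 if i < remainder else base for i in range(engines)]


shares = split_users(10, 3)
print(shares)  # [4, 3, 3]
```

Under this assumption, 10 virtual users on 3 engines would mean one engine simulating 4 users and the other two simulating 3 each.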

And that’s it! Press Start Test and watch the results start to flow in. See the new configuration guide for full details of all configuration options.

For the sake of this example, let’s use the following parameters:

View Results

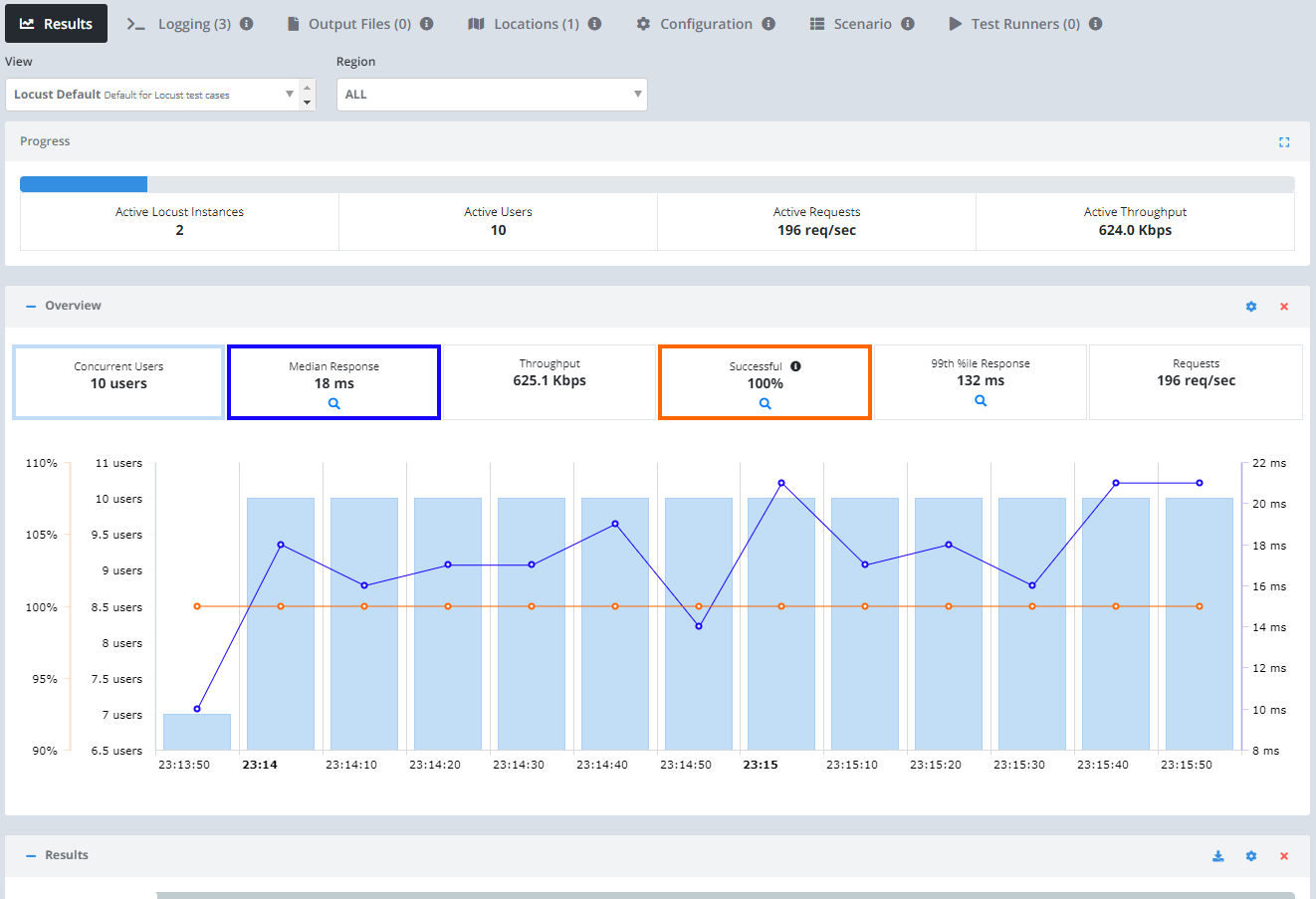

Once the test starts executing, Testable will distribute the work out to the selected test runners (e.g. Public Shared Grid in AWS N. Virginia).

In each region, the test runners execute 2 separate Locust instances concurrently. The results will include traces, performance metrics, logging, breakdown by URL, analysis, comparison against previous test runs, and more.

Check out the Locust guide for more details on running your Locust tests on the Testable platform.

We also offer integration (Org Management -> Integration) with third-party tools like New Relic. If you enable integration, you can do more in-depth analytics on your results there as well.

That’s it! Go ahead and try these same steps with your own scripts and feel free to contact us with any questions.